- The journal Nature publishes Lam’s groundbreaking study

- Rick Gottscho and Keren Kanarik share what it means for Lam and the semiconductor industry

For more than 150 years, the journal Nature has published some of the most exciting and groundbreaking research. With the April 13 issue (online March 8), Lam makes its mark in the world’s most prestigious scientific journal with an article titled “Human–Machine Collaboration for Improving Semiconductor Process Development,” co-authored by nine Lam employees.

“Our research is truly groundbreaking,” says Rick Gottscho, recent CTO of Lam. “It sets us apart as thought leaders in the application of data science to process engineering.”

The Nature article compares humans and machines at developing a semiconductor process at the lowest cost-to-target (that is, with the fewest experiments). To do so, the authors created a game to benchmark the performance of humans and computer algorithms in designing a semiconductor fabrication process: high aspect ratio dielectric etch.

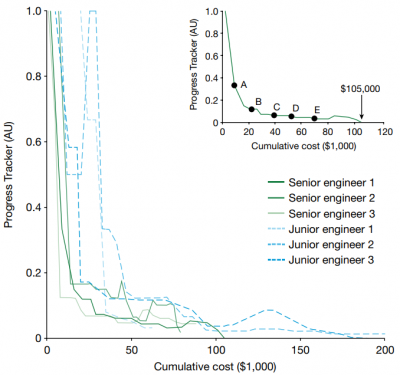

The results show that humans excel in the early stages of process development while algorithms are more cost-efficient near the tight tolerances of the target. This insight led to a “human first-computer last” approach that reduced the cost-to-target by half compared to a benchmark set by an expert process engineer with more than seven years of experience.

Why it matters: Lam now has quantifiable proof of how to use artificial intelligence in ways that could revolutionize process development in the semiconductor industry, which could save millions of dollars and countless hours.

- Read “Human–Machine Collaboration for Improving Semiconductor Process Development” by Keren Kanarik, Wojciech Osowiecki, Yu (Joe) Lu, Dipongkar Talukder, Niklas Roschewsky, Sae Na Park, Mattan Kamon, David Fried, and Richard A. Gottscho

Read on to learn about the experiment and its implications.

Avogadro’s Number

To build a chip, we need to develop the individual processes that make that chip. However, every process experiment can take minutes or hours, and then several more hours to prepare the samples for metrology (measurement). For the most challenging applications involving etching or filling high aspect ratio features, results usually arrive the next day and a whole batch can cost a few thousand dollars. Add all of this up over the course of the year and it gets very expensive and eats up a lot of time.

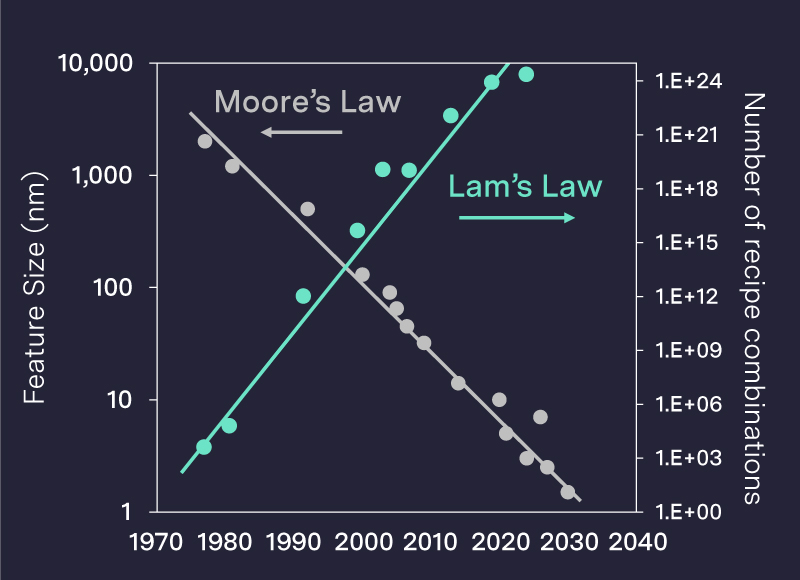

For more than a decade, Rick has highlighted this development problem via a concept he dubbed Lam’s Law (though it’s not just our problem), which refers to the increasing challenge of finding the best recipe out of all possible recipe combinations. The cheeky reference to Moore’s Law suggests the problem keeps getting worse, with the number of adjustable permutations an engineer can make when developing a wafer process exceeding 100 trillion (that’s 10¹⁴), an unfathomably large number.

- As wafer processing has grown more complex, the number of permutations may now be approaching 10²³ (or the order of magnitude of Avogadro’s number, for the hardcore).

“We cannot afford to explore a parameter space that consists of Avogadro’s number of permutations by testing everything—it's impossible,” Rick says. “In fact, you can’t even create a data set that would be considered sufficient for big data analytics. One hundred batches of experiments costs nearly half a million dollars and takes half a year, and that is still a small number in the Big Data world.”
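To put Rick’s numbers in perspective, here is a rough back-of-the-envelope calculation. The batch count, total cost, duration, and the 100 trillion figure come from the article; the number of recipes tested per batch is not stated anywhere, so `RECIPES_PER_BATCH` is a purely hypothetical value chosen for illustration.

```python
# Figures quoted in the article (rounded).
BATCHES = 100
TOTAL_COST_USD = 500_000     # "nearly half a million dollars"
TOTAL_TIME_YEARS = 0.5       # "half a year"
PARAMETER_SPACE = 10**14     # "100 trillion" recipe permutations

# Assumption, not from the article: recipes evaluated in each batch.
RECIPES_PER_BATCH = 10

cost_per_batch = TOTAL_COST_USD / BATCHES
recipes_tested = BATCHES * RECIPES_PER_BATCH

# Share of the recipe space that such a campaign actually touches.
fraction_explored = recipes_tested / PARAMETER_SPACE

# Time to test every permutation at the same pace.
years_to_exhaust = PARAMETER_SPACE / recipes_tested * TOTAL_TIME_YEARS

print(f"Cost per batch:       ${cost_per_batch:,.0f}")
print(f"Recipes tested:       {recipes_tested:,}")
print(f"Fraction explored:    {fraction_explored:.1e}")
print(f"Years to test it all: {years_to_exhaust:.1e}")
```

Under these assumptions, a half-year, half-million-dollar campaign covers a vanishingly small slice of the space, and the gap only widens if the space is closer to 10²³.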

Virtual Game

“For years data scientists were suggesting they could build algorithms to help solve Lam’s Law and develop manufacturable processes,” Keren Kanarik recalls. She’s the paper’s lead author and technical managing director in the office of the CTO. Also—and this is crucial—she used to be a process engineer herself and feels their pain. “But how could we know the programs were better than us humans? What would we compare them to? What was the benchmark?”

That’s when Keren hit on the idea of running a game. She thought about the famous chess game between Garry Kasparov and Deep Blue and figured one of our expert process engineers could be our “Garry.” But using which process?

- It would be impractical to do this in the laboratory because it would take too long, cost too much, and there would be too much variability that could disguise the learning.

- Her boss Rick suggested making the game virtual, so that as many players as wanted could play on the same process as many times as needed.

- After a prototype in 2019 and a more sophisticated version created over three months in 2020, a virtual etch process was born.

The virtual process made it possible to play a game that pitted humans against algorithms, and to evaluate one algorithm against another.

“The virtual environment gives us absolute control and gives us a way to evaluate in a systematic way, allowing us to do these evaluations,” Keren explains.

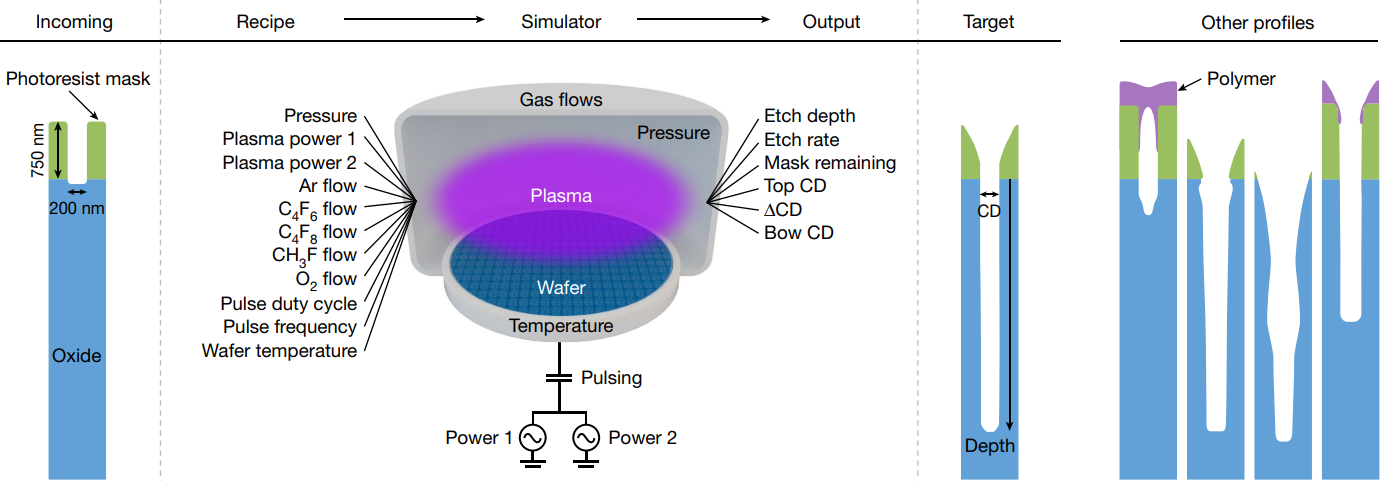

Schematic of the virtual process used in the game. The input of the virtual process is a “recipe” that controls the plasma interactions with a silicon wafer. For a given recipe, the simulator outputs metrics along with a cross-sectional image of a profile on the wafer. The target profile is shown along with examples of other profiles that do not meet the target. The goal of the game is to find a suitable recipe at the lowest cost-to-target. CD, critical dimension.
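The rules of the game can be sketched in a few lines of code. This is a toy illustration only, not Lam’s simulator or any algorithm from the study: `virtual_process`, the two recipe parameters, the hidden ideal values, the cost per experiment, and the random search player are all hypothetical stand-ins. What it does show is the scoring idea: every experiment costs money, and the player’s score is the total spent by the time the target is met.

```python
import random

COST_PER_EXPERIMENT = 1_000  # hypothetical dollars per simulated run


def virtual_process(recipe):
    """Toy stand-in for the simulator: maps a recipe to a single metric.

    The real virtual process returns several metrics plus a profile image;
    here the metric is simply the distance from a hidden ideal recipe.
    """
    ideal = {"pressure": 30.0, "power": 55.0}  # hypothetical values
    return sum((recipe[k] - ideal[k]) ** 2 for k in ideal) ** 0.5


def play(propose, start, tolerance, max_experiments):
    """Run experiments until the metric is within tolerance or the budget ends.

    Returns (best_recipe, cost_to_target, target_met).
    """
    best, best_score = start, virtual_process(start)
    experiments = 1
    while best_score > tolerance and experiments < max_experiments:
        candidate = propose(best, best_score)
        score = virtual_process(candidate)
        experiments += 1  # every run is paid for, improvement or not
        if score < best_score:
            best, best_score = candidate, score
    return best, experiments * COST_PER_EXPERIMENT, best_score <= tolerance


rng = random.Random(0)  # seeded so repeated runs behave the same


def random_local_search(best, best_score):
    """A deliberately naive player: nudge each knob by a random amount."""
    return {k: v + rng.uniform(-best_score, best_score) for k, v in best.items()}


recipe, cost, met = play(
    random_local_search,
    start={"pressure": 10.0, "power": 10.0},
    tolerance=1.0,
    max_experiments=200,
)
print(f"Target met: {met}, cost-to-target: ${cost:,}")
```

Swapping in a different `propose` function, whether a human’s choices or a smarter algorithm, lets every player be scored on the same process with the same yardstick, which is the point of making the game virtual.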

Lam Wins

Lam’s study confirmed that machines alone cannot yet do what an expert process engineer can do. If the machines, and the data scientists who write their algorithms, are not given any domain knowledge from the expert process engineer, they’re nowhere close to beating humans. In other words, when it comes to dialing in the right recipe for wafer processing, human beings are still essential.

But the computer algorithms could—and finally did—win when partnered with the humans under certain circumstances.

The trajectories are monitored by the Progress Tracker as defined in Methods. The target is met when the Progress Tracker is 0. Trajectories of senior engineers are in green and junior engineers in blue. The trajectory of the winning expert (senior engineer 1) is highlighted in the inset, showing transfer points A to E used in the HF–CL strategy. AU, arbitrary units.

The results of Lam’s study point to a path for substantially reducing cost-to-target by combining human and computer advantages. In doing so, the authors conclude, “we will accelerate a critical link in the semiconductor ecosystem, using the very computing power that these semiconductor processes enable. In effect, AI will help create itself—akin to the famous M.C. Escher circular graphic of two hands drawing each other.”

Related Articles

- Human–Machine Collaboration for Improving Semiconductor Process Development (Nature)

- The Semiverse Is a Paradigm Shift in How We Speed Up Innovation (Lam's Blog)

- On Rick Gottscho’s talk “Big Problems in a Little Data World” (Semi Engineering)